
Zheng Wang

I am a first-year PhD student in Computer Science at the University of Illinois Urbana-Champaign, advised by Prof. Minjia Zhang.

My research focuses on efficient and interpretable foundation models.

Prior to UIUC, I was fortunate to be advised by Prof. Yingyan (Celine) Lin as a Research Assistant at the EIC Lab in the School of Computer Science, Georgia Tech. Outside of research, I stay healthy by working out regularly, and I love playing tennis 🎾, which keeps me both physically and mentally sharp.

News

[05/2026] I was selected as a "Gold Reviewer" by ICML 2026.

[04/2026] PuzzleMoE was accepted to ICML 2026.

[01/2026] Two papers accepted to ICLR 2026 (Slow Fast Policy Optimization; Universal Position Interpolation). Congratulations to all collaborators.

[01/2026] One paper accepted to ACL 2026 (Hidden States as Early Signals).

[01/2026] One paper accepted to EACL 2026 (Think Hard Only When Needed).

[09/2025] ORCHES accepted to MICRO 2025.

[08/2025] Started my PhD at UIUC with Prof. Minjia Zhang!

[05/2025] One paper accepted to ACL 2025 (LAMB).

[07/2024] KVMerger released on arXiv.

[05/2024] Two papers accepted to ICML 2024 (Attention Calibration for LLMs; Linearized-LLM).

[05/2024] One paper accepted to DAC 2024 (EDGE-LLM).

Publications (* denotes equal contribution)

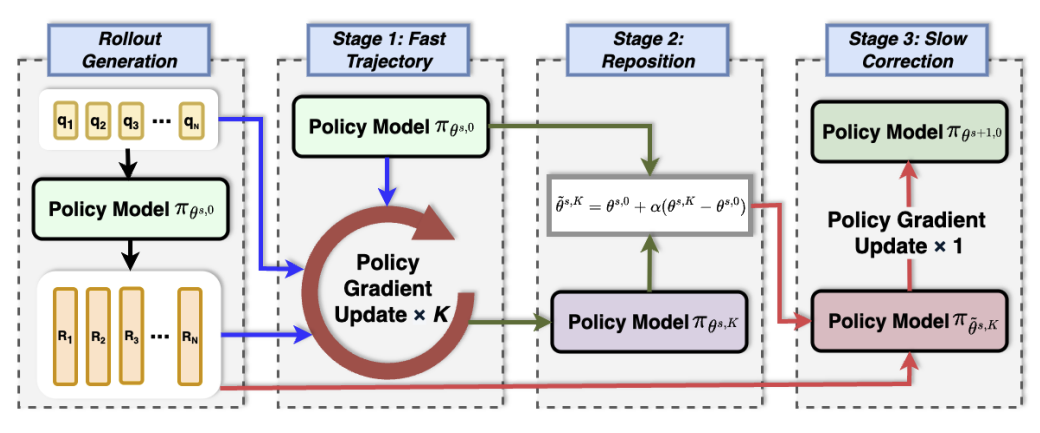

Slow-Fast Policy Optimization: Reposition-Before-Update for LLM Reasoning

ICLR 2026

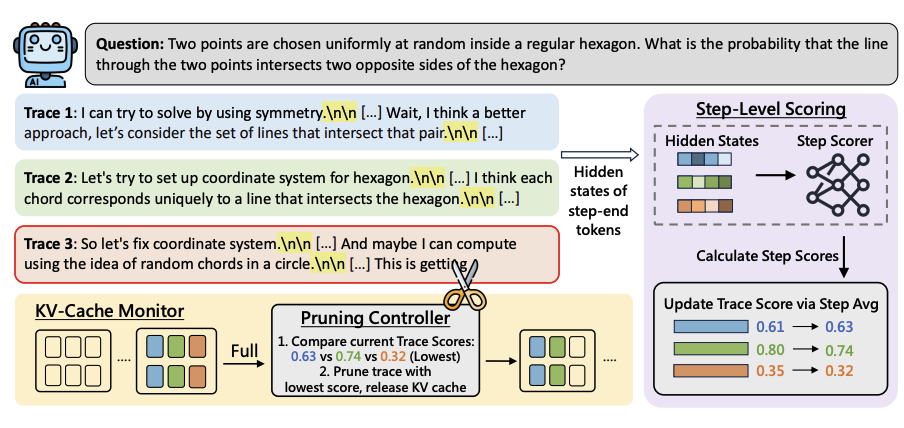

Hidden States as Early Signals: Step-level Trace Evaluation and Pruning for Efficient Test-Time Scaling

ACL Findings, 2026

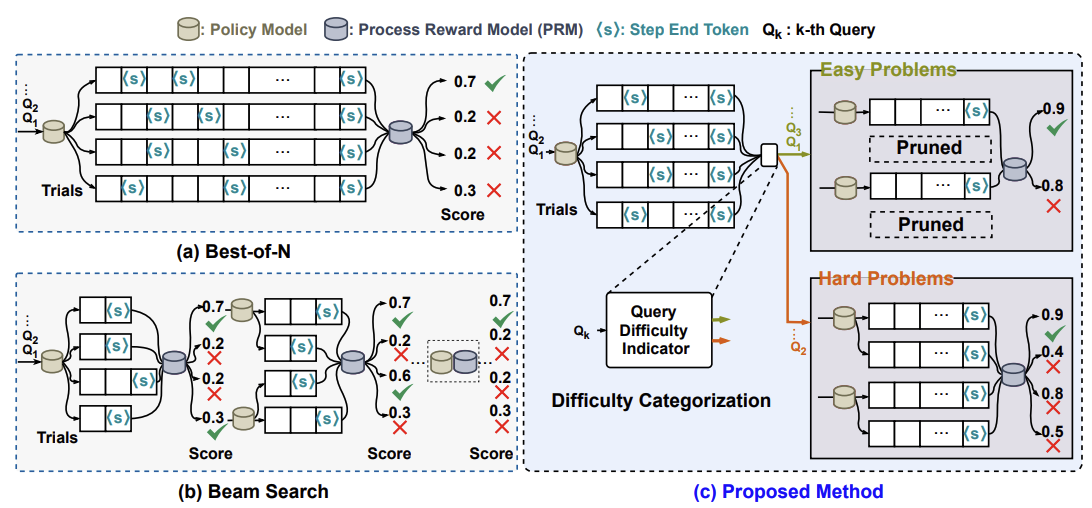

Think Hard Only When Needed: A Hybrid Best-of-N and Beam Search for Efficient Test-Time Compute

EACL 2026 [Paper]

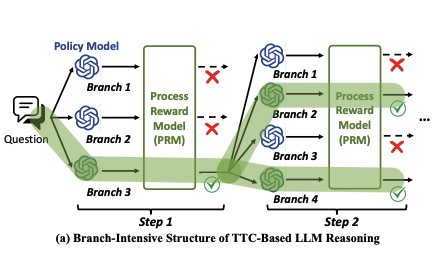

ORCHES: Orchestrated Test-Time-Compute-based LLM Reasoning on Collaborative GPU–PIM Heterogeneous System

MICRO 2025 [Paper]

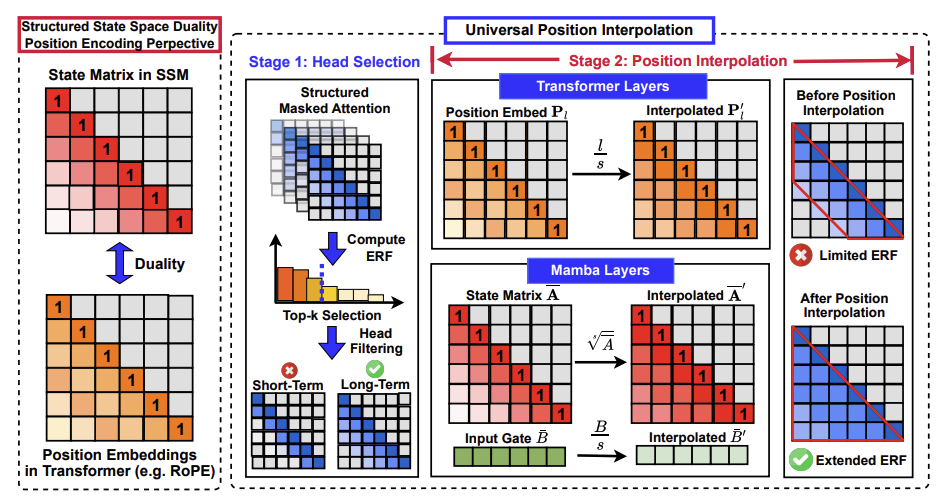

From Collapse to Control: Understanding and Extending Context Length in Emerging Hybrid Models via Universal Position Interpolation

ICLR 2026 [Paper]

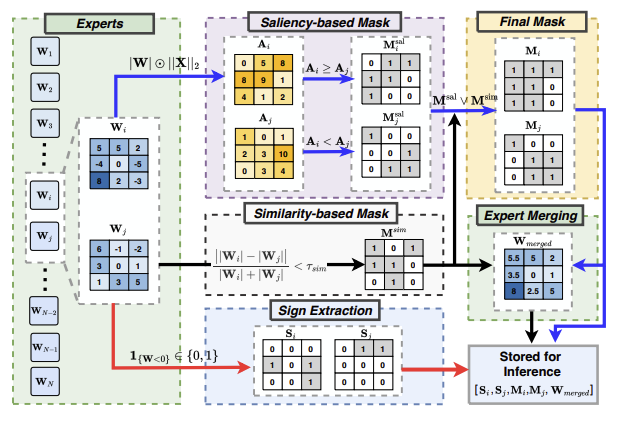

PuzzleMoE: Efficient Compression of Large Mixture-of-Experts Models via Sparse Expert Merging and Bit-packed Inference

ICML 2026 [Paper]

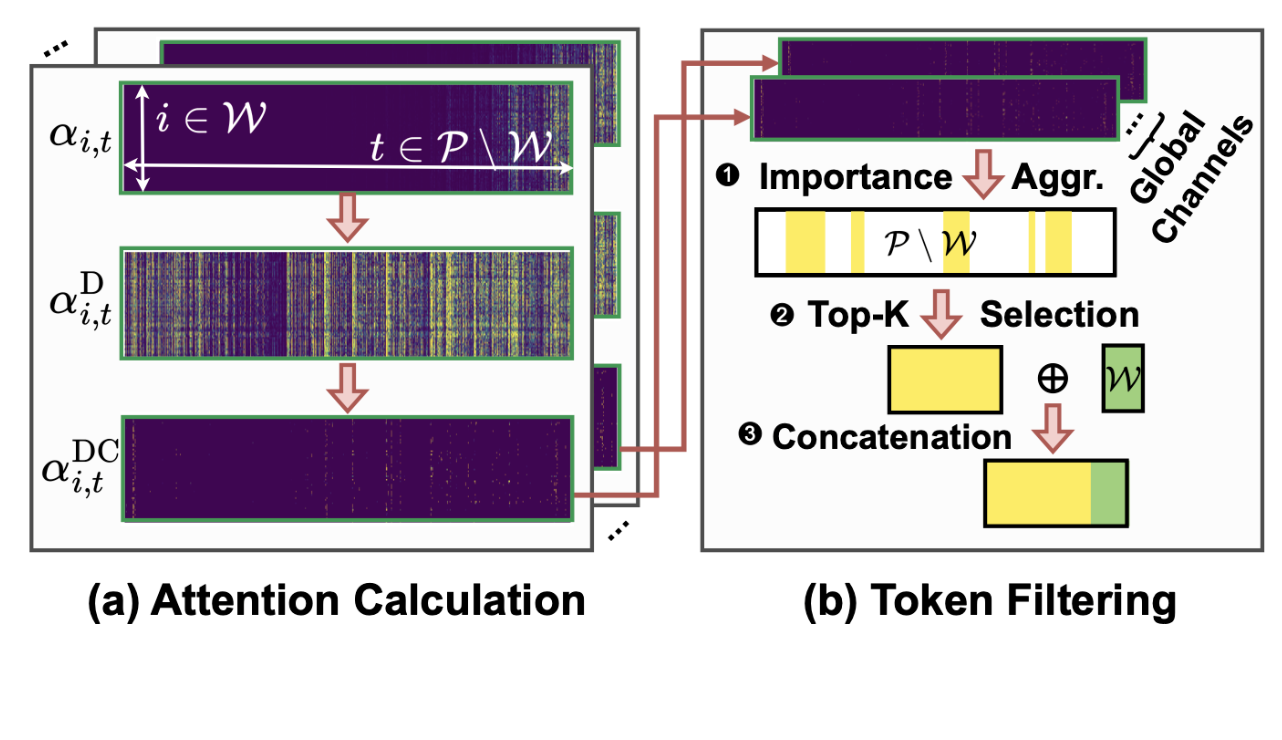

LAMB: A Training-Free Method to Enhance the Long-Context Understanding of SSMs via Attention-Guided Token Filtering

ACL 2025 [Paper]

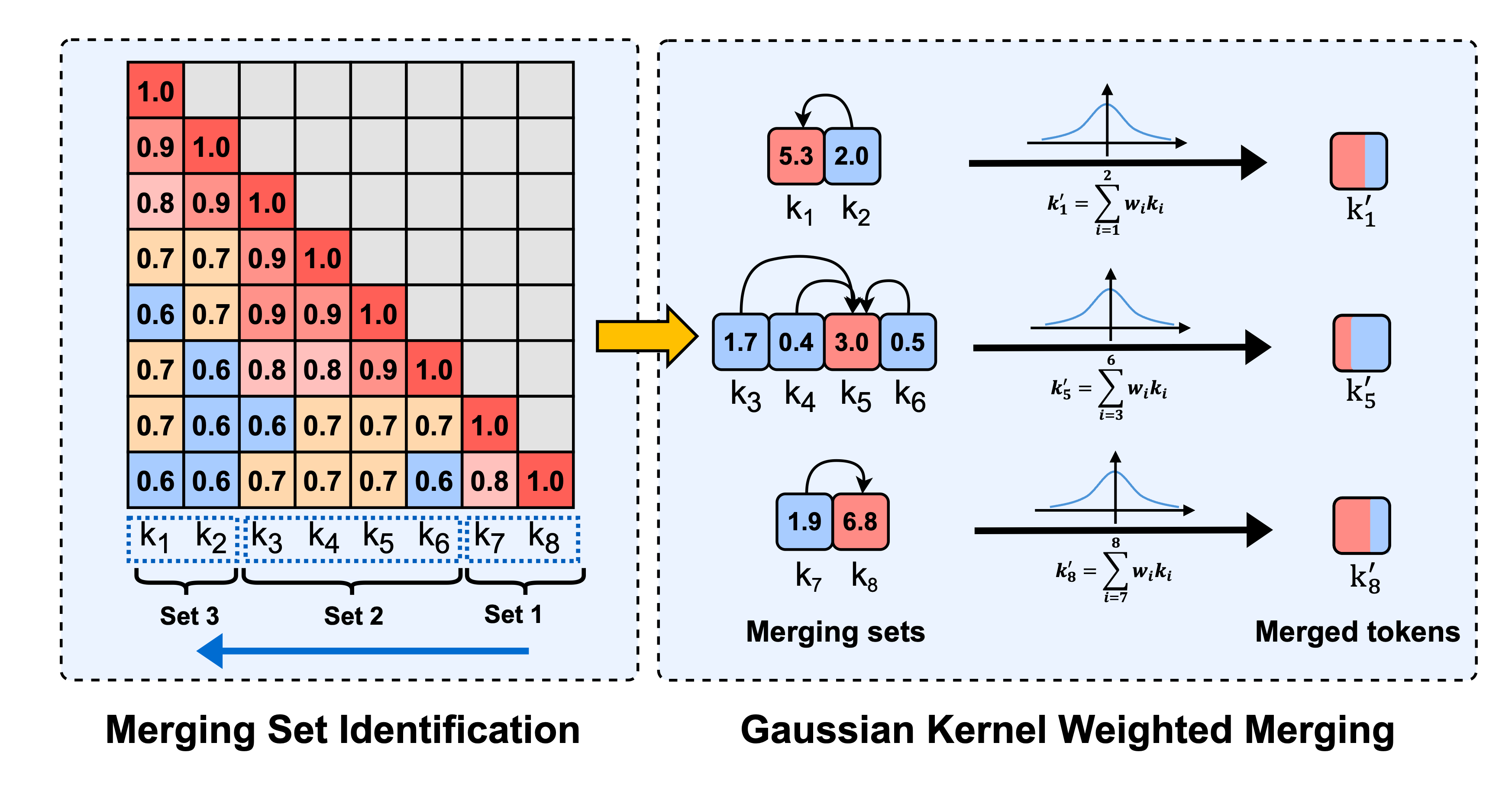

Model Tells You Where to Merge: Adaptive KV Cache Merging for LLMs on Long-Context Tasks

arXiv preprint, 2024 [Paper]

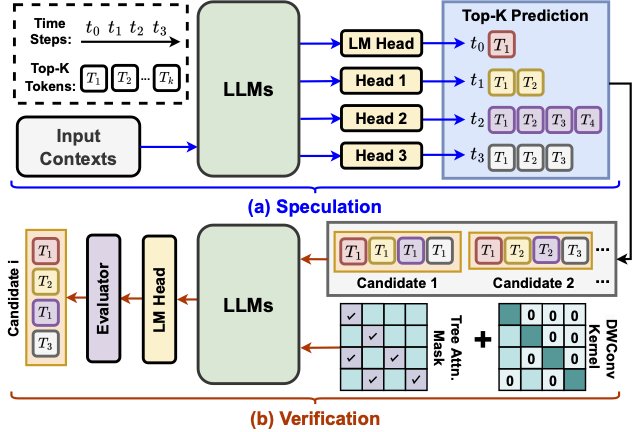

When Linear Attention Meets Autoregressive Decoding: Towards More Effective and Efficient Linearized Large Language Models

ICML 2024

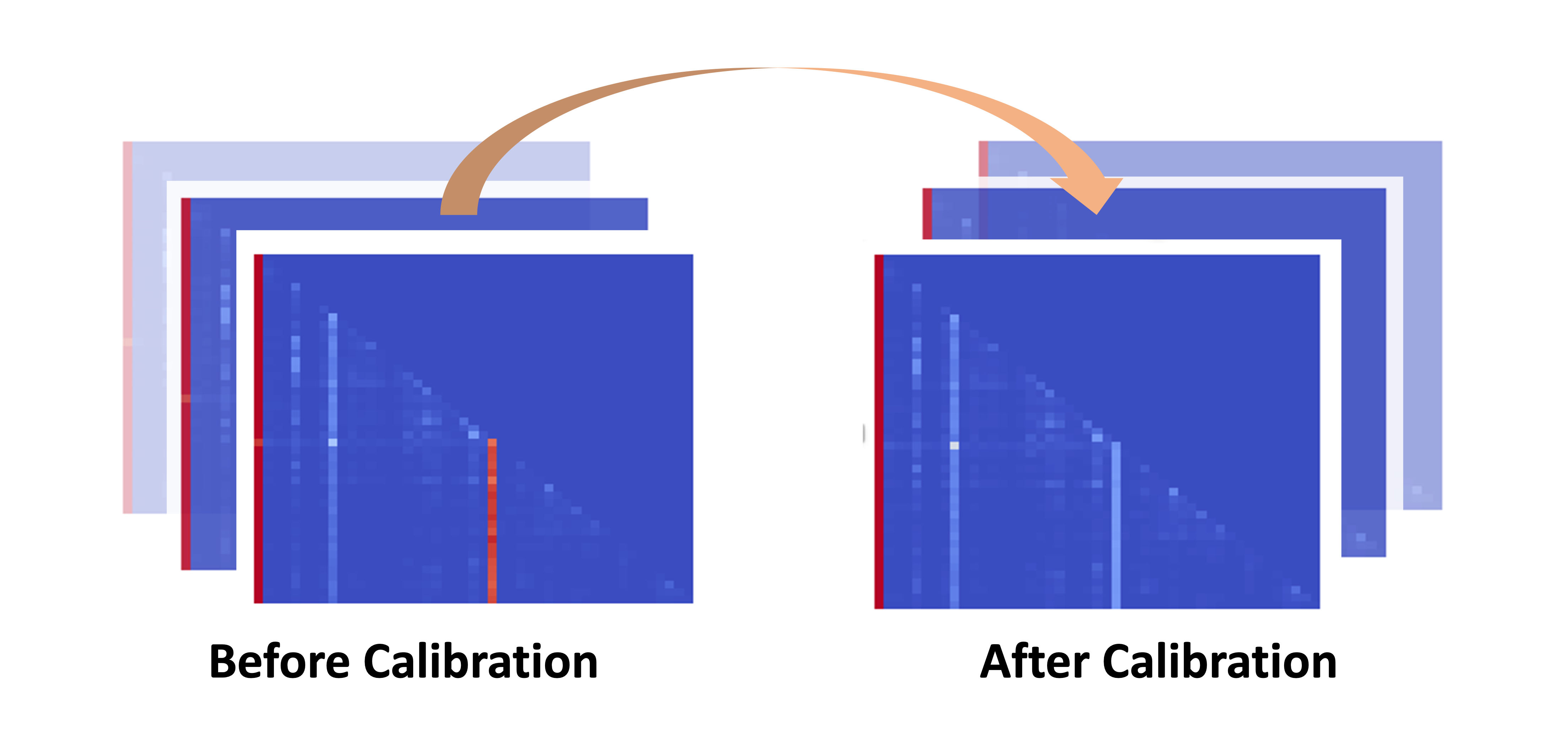

Unveiling and Harnessing Hidden Attention Sinks: Enhancing Large Language Models without Training through Attention Calibration

ICML 2024

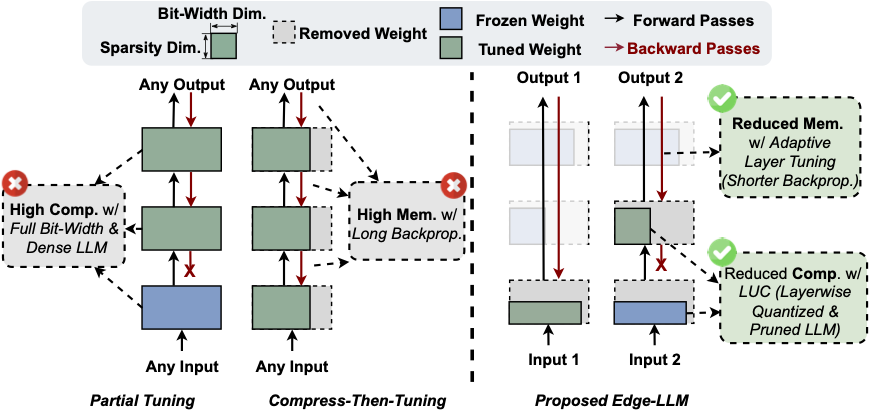

EDGE-LLM: Enabling Efficient Large Language Model Adaptation on Edge Devices via Layerwise Unified Compression and Adaptive Layer Tuning & Voting

DAC 2024

Teaching


Services


Selected Awards

Design and source code from jonbarron. Layout inspired by Yonggan Fu.